Marie Pelissier-Combescure

I am an engineer with a PhD from IRIT in Toulouse, France, specializing in computer vision, graphics, and artificial intelligence. I am currently a Postdoctoral Researcher in Artificial Intelligence & Medical Imaging within the PINKCC Lab (Precision Imaging as a New Key in Cancer Care), part of the IRCM (Institut de Recherche en Cancérologie de Montpellier), a team led by Stéphanie Nougaret. For the previous two years, I was a Temporary Teaching and Research Assistant (ATER) at Toulouse INP, France.

My thesis focused on selecting the optimal viewpoint of a 3D object to maximize visual information and identify the most relevant view, the one that best highlights essential features for object recognition. I approached this problem from two angles: first, through multimodal (2D/3D) processing based on analyzing views from images of the 3D object; and second, by working directly on 3D meshes. My research then aimed to improve 3D classification performance using multi-view and multimodal neural networks adapted to 3D data, integrating the evaluation of viewpoint relevance. My supervisors were Géraldine Morin and Sylvie Chambon.

Research

2025

Postdoctoral Researcher in Artificial Intelligence and Medical Imaging at the Precision Imaging as a New Key in Cancer Care (PINKCC Lab)

INSERM U1194 - Institut de Recherche en Cancérologie de Montpellier, IRCM

My current research focuses on ovarian cancer and aims to leverage all available patient data modalities, including CT and MRI imaging, histopathology whole-slide images, genomic profiles, and clinical variables, to improve survival prediction. Rather than treating each modality independently, the project investigates how modality-specific representations can be learned and combined to capture complementary and synergistic information. A central challenge is to determine which modalities to fuse, at what stage of the learning process, and through which fusion strategies, in order to model both intra-modality patterns (e.g. spatial heterogeneity in histology or imaging) and inter-modality relationships (e.g. links between images, genomic alterations, and clinical context). By systematically exploring early, intermediate, and late fusion paradigms, the goal is to design integrative models that better exploit cross-modal interactions and ultimately yield more accurate and robust survival predictions for ovarian cancer patients.

2024

Analyse de contenus visuels en 2D et en 3D : évaluation de la pertinence d'un point de vue d'un objet 3D

Marie Pelissier-Combescure

Thesis defense: June 24, 2024, Toulouse, France

Manuscript

This thesis aims to automatically select the most relevant 2D viewpoint for a given 3D object to facilitate identification and understanding. It quantifies viewpoint relevance by extracting essential object characteristics from available viewpoints. The research explores two main axes: evaluating the relevance of fixed viewpoints from textured images using geometric attributes and photographic recommendations, and determining the most representative viewpoint of an untextured 3D mesh by analyzing visible surface and intrinsic saliency based on viewing angle.

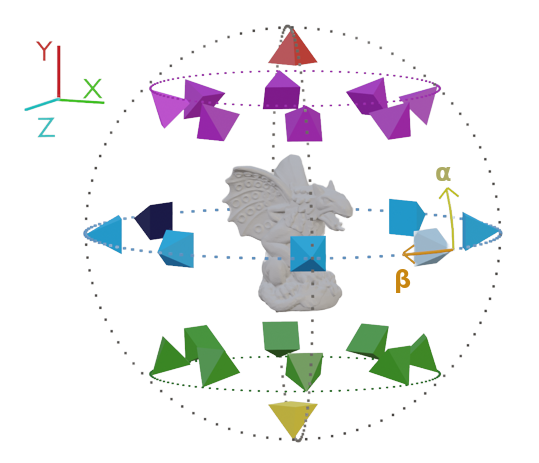

Most Relevant Viewpoint of an Object: a View-Dependent 3D Saliency Approach

Marie Pelissier-Combescure, Sylvie Chambon, Géraldine Morin

VISAPP International Conference on Computer Vision Theory and Applications, 2024, Rome, Italy

HAL

This paper introduces a new method for selecting the most relevant viewpoint of a 3D object, aiming to showcase the object effectively rather than focusing on aesthetics. The approach combines visibility and view-dependent saliency, incorporating factors like visible surface size and the saliency of visible vertices. A key contribution is an extensive evaluation protocol that includes a user study with over 200 participants and an analysis of image datasets to align with human preferences. The method outperforms existing techniques in selecting viewpoints that are most similar to human choices, even offering informative views where human biases might differ.

2023

Most Relevant Viewpoint of an Object: a View-Dependent 3D Saliency Approach

Marie Pelissier-Combescure, Sylvie Chambon, Géraldine Morin

J.FIG Les journées Françaises de l'Informatique Graphique, 2023, Montpellier, France

HAL

This paper presents a method for automatically selecting the most relevant 2D viewpoint of a 3D object by quantifying visible "essential object characteristics" rather than aesthetics. This is a preliminary result of the main paper published at VISAPP 2024, mentioned above.

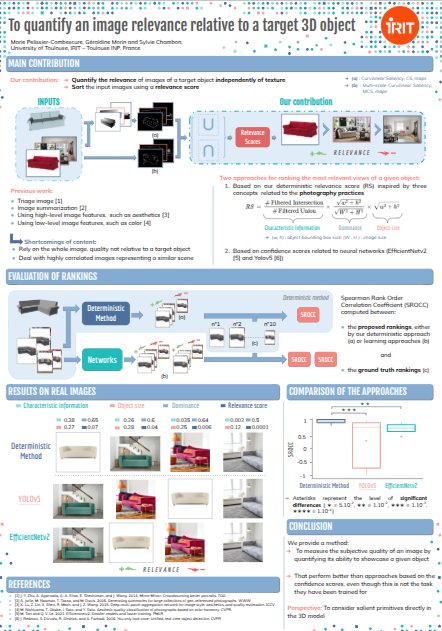

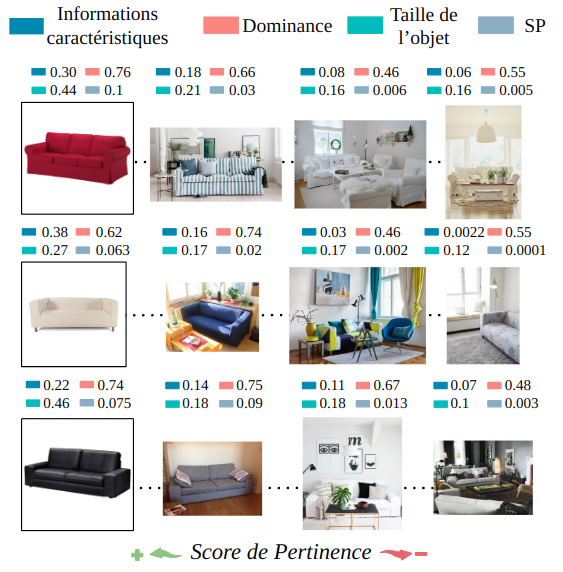

To quantify an image relevance relative to a target 3D object

Marie Pelissier-Combescure, Géraldine Morin, Sylvie Chambon

SCIA The Scandinavian Conference on Image Analysis, 2023, Levi, Finland

HAL

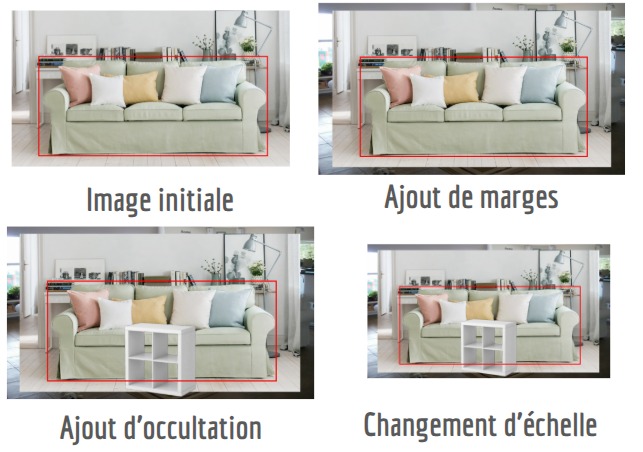

This paper introduces methods to quantify the relevance of 2D images in showcasing a 3D object, aiming to identify views that present essential characteristics regardless of texture. It proposes a deterministic relevance score based on geometric attributes and photographic recommendations, utilizing curvilinear saliency to extract features and filter irrelevant information. The paper also explores a learning-based approach using confidence scores from neural networks. Evaluation is performed using objective image rankings based on simulated degradations, demonstrating the deterministic method's efficiency and robustness, and providing insights into the behavior of learning-based methods.

To quantify an image relevance relative to a target 3D object

Marie Pelissier-Combescure, Géraldine Morin, Sylvie Chambon

JNIM Journées nationales du GDR IM, 2023, Paris, France

HAL / Poster

This poster, presenting results that earned the best contribution award at GTMG 2022, details a method for quantifying the visual relevance of a 2D image in showcasing a 3D object.

2022

Quelle image met le mieux en valeur un modèle 3D ?

Marie Pelissier-Combescure, Géraldine Morin, Sylvie Chambon

RFIAP Congrès Reconnaissance des Formes, Image, Apprentissage et Perception, 2022, Vannes, France

HAL

This paper proposes a method to quantify how well a 2D image showcases a 3D object, ranking images based on a "relevance score" derived from object dominance, size, and curvilinear saliency information. It also compares this approach to confidence scores from neural networks. Validated using systematically degraded images, the deterministic method proves effective and robust, outperforming most deep learning models. This work was later accepted at SCIA 2023.

Représentation d'un objet 3D dans une image 2D : mesure de la qualité par apprentissage profond et par une approche déterministe

Marie Pelissier-Combescure, Géraldine Morin, Sylvie Chambon

GTMG Groupe de Travail en Modélisation Géométrique, 2022, Dijon, France

HAL

This work is a preliminary result of the main paper published at SCIA 2023, mentioned above. 🏅 Award for best contribution

2021

Extraction et comparaison d'information saillante : Pose favorable et image 2D révélatrice d'un objet 3D

Marie Pelissier-Combescure, Géraldine Morin, Sylvie Chambon

ORASIS Journées francophones des jeunes chercheurs en vision par ordinateur, 2021, Revel, France

HAL

This paper proposes methods to quantify the essential 3D object information present in a 2D image, distinguishing between the "favorability" of an object's pose and the "relevance" of the 2D image itself. The core idea is to extract and match salient features from both 2D images and their corresponding 3D models using a curvilinear saliency detector, which ensures high repeatability between modalities. This allows for ranking poses and images based on the amount of essential information they contain about the object. The paper presents initial results that are encouraging, although it identifies areas for improvement such as handling occlusions and ensuring all salient points in depth maps are detected.

Education

This website is inspired by Jon Barron's. Last updated July 2025.